NVIDIA Blackwell:

The GPU That Revolutionizes AI

With its 208 billion transistors, innovative memory management and cutting-edge energy-efficiency systems, NVIDIA Blackwell is transforming the way companies of all sizes tackle the challenges of modern AI. This new technological marvel, capable of performing true ‘magic’, is the latest product of the vision of Jensen Huang, co-founder and CEO of the Californian giant. In this article, we will explore what makes it the ‘steam engine’ of the digital industrial revolution.

What NVIDIA Blackwell is and why it changes the game.

NVIDIA Blackwell is the latest evolution of graphics chips (GPU, Graphics Processing Unit) that, not so long ago, were almost exclusively dedicated to rendering visuals in video games. Today, this technology has evolved into a fully-fledged ‘ecosystem’ (*1), designed to handle the ever-growing demands of Artificial Intelligence.

Built from 208 billion transistors manufactured on a custom TSMC 4NP (4-nanometre-class) process (*2) and spread across two dies in a single package, Blackwell’s architecture leverages the latest generation of tensor cores (*3), optimised for the most demanding operations, particularly those involved in deep learning. Among its key innovations is HBM3e memory, whose very high bandwidth (loosely speaking, ‘speed’) keeps models with billions of parameters supplied with data and reduces memory bottlenecks. This allows it to excel both in the ‘training’ phase, where AI learns, and the ‘inference’ phase, where it applies that knowledge.
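To see why memory bandwidth matters so much for models with billions of parameters, a rough back-of-the-envelope calculation helps. The sketch below estimates how much memory a model's weights occupy at different numeric precisions and how long it takes to read them once from memory. The 70-billion-parameter model and the 8 TB/s bandwidth figure are illustrative assumptions, not measurements, and the function names are hypothetical.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"FP32": 4.0, "FP16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weights_size_gb(num_params: float, precision: str) -> float:
    """Size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def seconds_to_read_weights(num_params: float, precision: str,
                            bandwidth_gb_per_s: float) -> float:
    """Time to stream all weights once from memory at the given bandwidth."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return weights_size_gb(num_params, precision) / bandwidth_gb_per_s


if __name__ == "__main__":
    params = 70e9        # assumption: a 70-billion-parameter model
    bandwidth = 8000.0   # assumption: 8 TB/s, expressed in GB/s
    for precision in BYTES_PER_PARAM:
        size = weights_size_gb(params, precision)
        millis = seconds_to_read_weights(params, precision, bandwidth) * 1000
        print(f"{precision}: {size:.0f} GB, {millis:.2f} ms per full read")
```

Halving the precision halves both the memory footprint and the time needed to read the weights, which is one reason lower-precision formats and faster memory go hand in hand.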

Notes

*1: ‘Ecosystem’ refers to an integrated system that encompasses, beyond the hardware (the GPU itself), software, development tools, connectivity technologies and cloud services, all working in concert to optimise AI performance.

*2: To put it into perspective, a ‘nanometre’ is one millionth of a millimetre!

*3: ‘Tensor cores’ are processing units engineered to accelerate the matrix multiplications and additions at the heart of neural networks.
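As an illustration of note *3, the basic operation a tensor core accelerates is a fused matrix multiply-accumulate, D = A × B + C, applied to small tiles of much larger matrices. The pure-Python sketch below shows only the arithmetic; the function name `matmul_accumulate` and the 2×2 tile are illustrative assumptions, and real tensor cores perform this step in hardware on many tiles in parallel.

```python
def matmul_accumulate(a, b, c):
    """Return D = A x B + C for matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    if any(len(row) != inner for row in a):
        raise ValueError("columns of A must match rows of B")
    if len(c) != rows or any(len(row) != cols for row in c):
        raise ValueError("C must have the same shape as A x B")
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) + c[i][j]
         for j in range(cols)]
        for i in range(rows)
    ]


if __name__ == "__main__":
    a = [[1, 2], [3, 4]]
    b = [[5, 6], [7, 8]]
    c = [[1, 1], [1, 1]]
    print(matmul_accumulate(a, b, c))  # prints [[20, 23], [44, 51]]
```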

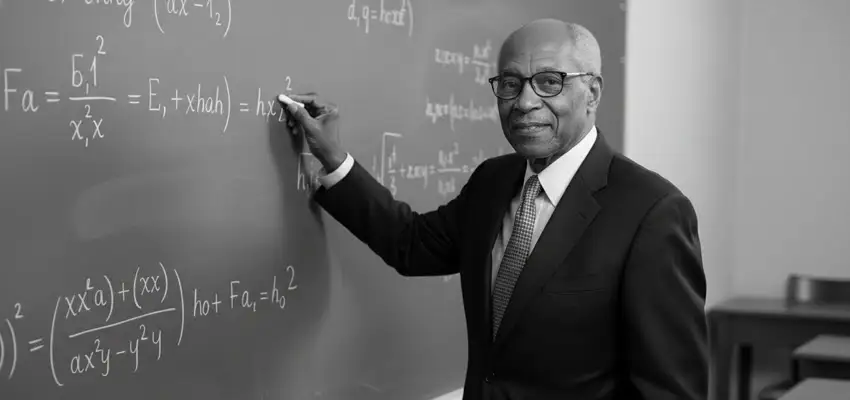

The origins of the name ‘NVIDIA Blackwell’.

The name NVIDIA Blackwell pays tribute to David Harold Blackwell (1919–2010), an African-American statistician and mathematician whose scientific contributions revolutionised the fields of game theory, probability theory and statistics. He was the seventh African-American to earn a Ph.D. in mathematics, going on to serve as president of the Institute of Mathematical Statistics in 1955–1956 and receiving the prestigious John von Neumann Theory Prize in 1979.

This choice is no coincidence. NVIDIA has a long-standing tradition of naming its most significant GPU architectures after great scientists and mathematicians, from Turing and Maxwell to Pascal, Volta and Hopper, all pivotal figures in the history of science and computation.

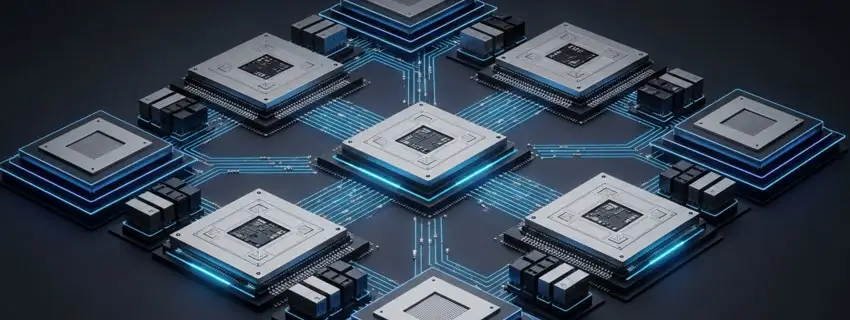

NVIDIA Blackwell: The Modular Architecture Powering AI.

The true strength of NVIDIA Blackwell lies in its modular architecture: unlike traditional single-die GPUs, each Blackwell GPU combines two large dies in one package, joined by a 10 TB/s chip-to-chip link so that software sees them as a single GPU. (NVLink-C2C is the related interconnect that couples Blackwell GPUs to the Grace CPU in the GB200 superchip.) Within the GPU, tensor cores are dedicated to matrix calculations, essential for the functioning of neural networks (which in turn underpin artificial intelligence models), while cache and HBM3e memory are shared across the processing units. This design reduces latency, that is, the delay in data transfers between components, speeding up complex operations such as the ‘attention’ mechanisms used by AI transformer models (*1).

Another key innovation is the integrated decompression engine: dedicated hardware that unpacks compressed data on the GPU itself instead of leaving this work to the CPU. For data-heavy workloads such as database queries and analytics, this removes a common bottleneck and can, in some cases, cut the time required to complete highly demanding computational processes from days to hours. Finally, the ultra-fast HBM3e memory helps ensure that the compute units are not left waiting for data during the most intensive operations.
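To give a feel for what decompression involves, here is a small CPU-side sketch using Python's standard `zlib` module (the Deflate format). It does not use or model the GPU's decompression engine; it simply shows that data is stored compactly and must be unpacked before it can be processed, which is the step that dedicated hardware is meant to speed up. The helper names and the sample records are hypothetical.

```python
import zlib


def compress_records(records):
    """Join text records with newlines and compress them with Deflate."""
    raw = "\n".join(records).encode("utf-8")
    return raw, zlib.compress(raw, 6)


def decompress_records(blob):
    """Unpack a compressed blob back into the original list of records."""
    return zlib.decompress(blob).decode("utf-8").split("\n")


if __name__ == "__main__":
    # Hypothetical, highly repetitive data, similar to many database columns.
    records = ["sensor=42,status=ok"] * 1000
    raw, blob = compress_records(records)
    print(f"raw: {len(raw)} bytes, compressed: {len(blob)} bytes")
    assert decompress_records(blob) == records
```

The sketch assumes that individual records contain no newline characters, since newlines are used as the separator.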

Note:

*1: ‘Attention’ in ‘transformer’ models is the mechanism that allows AI to identify which parts of a given input are most relevant, enabling it to grasp the overall meaning.
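The attention mechanism described in the note can be sketched in a few lines. The example below implements the standard scaled dot-product attention in pure Python: each query is compared with every key, the scores are turned into weights with a softmax, and the output is a weighted average of the values. It is a teaching sketch with hypothetical toy inputs, not how a GPU executes the operation; on real hardware the same arithmetic runs as large matrix multiplications on the tensor cores.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Relevance of each key to this query, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


if __name__ == "__main__":
    queries = [[1.0, 0.0]]
    keys = [[1.0, 0.0], [0.0, 1.0]]
    values = [[10.0, 0.0], [0.0, 10.0]]
    # The query matches the first key more closely, so the output
    # leans towards the first value vector.
    print(attention(queries, keys, values))
```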

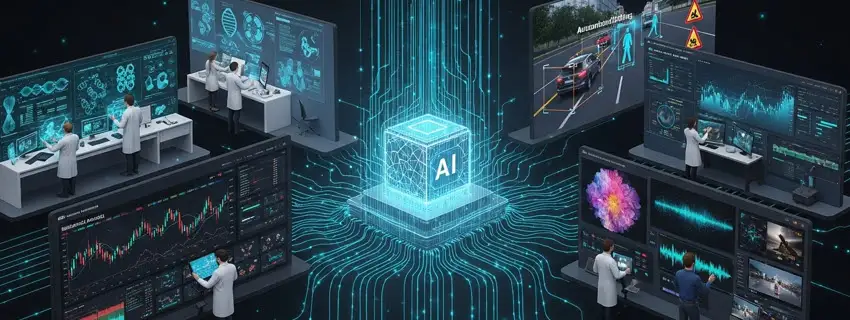

Practical applications: where NVIDIA Blackwell is making a difference.

NVIDIA Blackwell is making its mark in several crucial sectors. In healthcare, for instance, it accelerates the simulation of protein interactions, helping researchers to identify promising drug candidates sooner and to shorten the preclinical research phase. In the field of computer vision (the ability of an AI to ‘see’ and interpret images), models trained on this architecture allow autonomous vehicles to process complex driving scenarios with greater accuracy. In the financial sector, institutions are leveraging this computational power to analyse market trends using advanced predictive models.

Perhaps the most compelling application involves generative AI (Artificial Intelligence that creates new content): professionals are already using the GPU to produce hyperrealistic videos and images at a quality and speed that would have been unthinkable until recently.

Thanks to this architecture, what once seemed like science fiction is becoming concrete reality at a surprisingly rapid pace.

NVIDIA Blackwell vs Hopper: a significant generational leap.

Comparing NVIDIA Blackwell with its predecessor, Hopper, reveals significant differences both in architecture and, consequently, in performance. When the Hopper architecture was announced in 2022, it introduced the ‘Transformer Engine’: a mechanism inside the GPU that speeds up data processing in transformer-based generative AI models by using lower-precision arithmetic where accuracy allows, thus reducing computation times. Two years later, Blackwell refines this accelerator with a second-generation Transformer Engine that adds support for even lower-precision formats such as FP4, delivering markedly higher performance.

In terms of energy consumption, the new architecture is also significantly more efficient, a crucial advantage in an era where the energy demands of data centres have become a critical factor. This level of optimisation means that companies and organisations can achieve substantially higher performance while keeping energy consumption in check, aligning with global sustainability goals.

NVIDIA Blackwell effectively redefines the economics of artificial intelligence through an approach grounded in scalability and resource optimisation.

Jensen Huang’s vision: the driving force behind NVIDIA Blackwell.

Behind every technological leap at NVIDIA stands Jensen Huang, co-founder (alongside Chris Malachowsky and Curtis Priem) and CEO of a company that has transformed itself from a leading producer of graphics cards for video games into a global leader in Artificial Intelligence.

This remarkable evolution is the result of a three-decade journey shaped by the philosophy of this visionary: innovation as a means of anticipating the future, rather than merely adapting to it.

Huang’s ability to read market trends ahead of competitors has allowed NVIDIA to assume a role that goes far beyond that of a simple chip supplier, becoming the architect of the entire modern AI ‘ecosystem’.

Energy efficiency: NVIDIA Blackwell’s competitive edge.

The true revolution of NVIDIA Blackwell is not measured solely in teraflops (trillions of floating-point operations per second, a common measure of a processor’s computational power), but also in energy efficiency. Its innovative architecture introduces advanced dynamic power management systems at the chip level, significantly reducing consumption during less intensive processing phases.

This GPU also supports advanced cooling solutions, including liquid cooling as implemented in server systems such as the GB200 NVL72, capable of maintaining optimal operating temperatures even under heavy workloads: this not only extends the lifespan of the component, but also considerably lowers the costs associated with heat dissipation in data centres.

Inference performance: the critical economic factor.

If ‘training’ is the phase in which AI ‘learns’ from the information made available to it, ‘inference’ is the moment in which it ‘acts’ on that information, responding to our questions. It is precisely at this stage that NVIDIA Blackwell flexes its muscles, effectively rewriting the ‘price list’ of artificial intelligence. One of the biggest challenges facing current large language models (LLMs, such as those behind ChatGPT, Gemini and Claude) is the enormous energy cost of running them day after day while responding to millions of users. The architecture of the new GPU was designed specifically to address this problem, delivering substantially higher inference performance than previous generations. This efficiency allows companies to serve a far greater number of generative AI requests while keeping costs under control.
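The link between inference throughput and running costs can be made concrete with simple arithmetic. The sketch below estimates the electricity cost of serving one million requests, given a system's power draw, the number of requests it handles per second and the price of electricity. All figures in the example are hypothetical assumptions chosen for illustration, not Blackwell measurements, and the function names are invented for this sketch.

```python
JOULES_PER_KWH = 3.6e6  # 1 kWh = 3.6 million joules


def energy_per_request_joules(power_watts, requests_per_second):
    """Average energy spent on one request (watts = joules per second)."""
    if requests_per_second <= 0:
        raise ValueError("requests_per_second must be positive")
    return power_watts / requests_per_second


def energy_cost_per_million_requests(power_watts, requests_per_second,
                                     price_per_kwh):
    """Electricity cost of serving one million requests."""
    joules = energy_per_request_joules(power_watts, requests_per_second)
    kwh = joules * 1_000_000 / JOULES_PER_KWH
    return kwh * price_per_kwh


if __name__ == "__main__":
    # Hypothetical server: 1,000 W, electricity at 0.20 per kWh.
    for rps in (10.0, 20.0, 40.0):
        cost = energy_cost_per_million_requests(1000.0, rps, 0.20)
        print(f"{rps:.0f} requests/s: {cost:.2f} per million requests")
```

At constant power draw, doubling the number of requests served per second halves the energy cost of each request, which is why inference performance translates so directly into economics.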

NVIDIA Blackwell for small businesses: democratising AI.

Although hardware of this class has long been associated with technology giants, NVIDIA Blackwell is also transforming the world of small and medium-sized enterprises (SMEs): through cloud computing providers such as AWS and Google Cloud, smaller companies can rent GPU instances on demand, paying only for actual usage, with no need for multi-million-dollar upfront investments.

For example, a marketing agency can generate content for its clients in a fraction of the time, while a medically focused startup can run simulations that until recently required enormously costly infrastructure.

This level of accessibility is giving rise to a new generation of professionals capable of leveraging cutting-edge AI as a concrete tool in their daily work.
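The pay-per-use model can be compared with an outright purchase using a simple break-even calculation. The sketch below is only an illustration: the purchase price, hourly rate and usage figures are hypothetical, real cloud pricing varies by provider and region, and the calculation ignores electricity, staffing and depreciation.

```python
def rental_cost(hours, hourly_rate):
    """Total cost of renting GPU capacity for a number of hours."""
    return hours * hourly_rate


def break_even_hours(purchase_cost, hourly_rate):
    """Hours of rental after which buying would have been cheaper."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return purchase_cost / hourly_rate


if __name__ == "__main__":
    purchase_cost = 300_000.0  # hypothetical price of an on-premises server
    hourly_rate = 50.0         # hypothetical cloud price per hour
    monthly_hours = 40.0       # hypothetical usage of a small agency

    print(f"Monthly rental: {rental_cost(monthly_hours, hourly_rate):.0f}")
    months = break_even_hours(purchase_cost, hourly_rate) / monthly_hours
    print(f"Break-even after about {months:.0f} months of such usage")
```

For occasional workloads the break-even point lies many years away, which is why renting tends to suit smaller companies.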

The Connectivity Revolution with NVLink.

Having a single, extremely powerful GPU is of limited value if it cannot communicate effectively with all the others within a data centre: it’s precisely for this reason that the launch of NVIDIA Blackwell was accompanied by the introduction of fifth-generation NVLink, the latest version of NVIDIA’s high-speed GPU-to-GPU interconnect, which provides up to 1.8 TB/s of bandwidth per GPU.

This system acts as a kind of ‘nervous system’, allowing hundreds of chips to exchange data at remarkable speeds (*1). Such integration makes it possible to treat entire server clusters as a single computational entity, addressing the latency issues that so often hinder large-scale AI projects.

Note:

*1: 72 GPUs in the GB200 NVL72 system; fifth-generation NVLink can scale to as many as 576 GPUs in a single NVLink domain.
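To illustrate why interconnect bandwidth matters, the sketch below compares how long it takes to move the same amount of data between two GPUs over two different links. The bandwidth figures are assumptions based on headline numbers (about 1.8 TB/s per GPU for fifth-generation NVLink and roughly 128 GB/s for a PCIe Gen5 x16 slot); real transfers are slower because of protocol overhead and latency, and the function name is hypothetical.

```python
# Assumed peak bandwidths in gigabytes per second (headline figures).
LINKS_GB_PER_S = {
    "PCIe Gen5 x16": 128.0,
    "NVLink (5th generation)": 1800.0,
}


def transfer_seconds(data_gb, bandwidth_gb_per_s):
    """Idealised time to move data_gb gigabytes over a link."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return data_gb / bandwidth_gb_per_s


if __name__ == "__main__":
    data_gb = 180.0  # hypothetical payload, e.g. a large set of model weights
    for name, bandwidth in LINKS_GB_PER_S.items():
        millis = transfer_seconds(data_gb, bandwidth) * 1000
        print(f"{name}: {millis:.0f} ms")
```

Under these assumptions the NVLink transfer is about 14 times faster, which is what allows a rack of GPUs to behave more like a single large processor.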

Blackwell and the future of the film industry.

The film industry is undoubtedly one of the fields where Artificial Intelligence is having the greatest impact. For many years now, studios such as Industrial Light & Magic have recognised the value of new technologies, using NVIDIA GPUs to optimise their workflows. With Blackwell, the possibilities will multiply: the ability to process billions of parameters in real time will make it possible, for example, to dramatically accelerate the processing of visual effects, rendering and compositing. For the ‘dream factories’, this will translate into shorter production times and, above all, greater creative freedom, as the computational infrastructure will be able to keep pace even with the imagination of the most daring storytellers.

What does the future hold after NVIDIA Blackwell?

While the technology world is still getting used to the ‘magic’ of NVIDIA Blackwell, NVIDIA’s laboratories are already working on the next generation of GPUs, whose name has already been announced: ‘Rubin’, a clear reference to Vera Rubin, the American astronomer whose work on galaxy rotation provided key evidence for dark matter. Early indications suggest that performance and, above all, energy efficiency will continue to be the top priorities.

Copyright information.

The images on this page were created using generative Artificial Intelligence tools.